As part of a “creativity dojo” we’ve had at work, I finally got to implement something I’ve long felt was needed in our QA – a framework for evaluating algorithms’ quality.

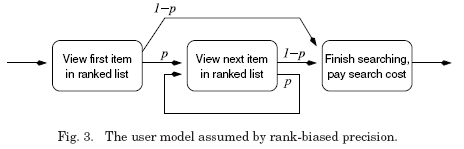

Having lived on the seam between algorithm development and product management for the past few years, I’ve come to appreciate the need to evaluate not just that an algorithm works, but that it works well. A search engine may return results that contain the keywords, but are these the most relevant ones? A recommendation algorithm may return products that are related to the user in some way, but can they be considered “good” recommendations?

During my master’s studies I came to know the work done over at TREC, and was fascinated by the strong emphasis on what we developers often skim over – evaluating results’ quality statistically, and moreover analyzing the evaluation method itself, to ensure that it is sound. So with that approach in mind, I teamed up with our talented QA team to create a working framework in two days. Here are some lessons and tips learned along the way that could be useful to others attempting something similar:

- Create a generic tool. TREC is mostly about search; however, with some imagination, most AI algorithms can be reduced to similar building blocks. Search, recommendation, classification – all could eventually be reduced to taking an input and returning a ranked list of results, on which the same quality metric can be applied. Code-wise, we used a generic scoring class, with a wrapping interface that has different implementations for different algos to provide the varying context.

- Use large data. This may sound trivial in the academic world, but when you’re in a QA state of mind, you tend to get used to creating small worlds that are easy to control. Not here. It’s very important to simulate real-life user scenarios by using data that’s similar to production, so we used our integration environment, which replicates production data.

- Facilitate judging. Obtaining relevance judgments is crucial to getting useful tests. The customer here is a business owner / product manager, who may not appreciate the tedious task of rating results. We created a browser plugin that allows rating from within the actual results page, and accumulates those ratings in a per-test relevance file.

- Measure test staleness. The downside of using non-controlled data is that it pulls the rug out from under your feet. Data may change over time and your test may become less relevant. We used Buckley’s Binary Preference (bPref) measure, which functions well with incomplete judgments, and also introduced a weighted measure of how many unjudged results are found, to trigger a test failure when results become too unreliable (requiring another judging round).
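
To make the scoring and staleness ideas more concrete, here is a minimal Python sketch of how they could fit together. It is an illustration under stated assumptions, not the framework’s actual code: the names `RankedAlgorithm`, `bpref`, `unjudged_weight` and `evaluate`, the thresholds, and in particular the 1/rank weighting of unjudged results are all hypothetical. The bPref computation follows the commonly used formulation, in which unjudged results are simply ignored and each relevant result is penalized by the number of judged non-relevant results ranked above it.

```python
from typing import Dict, List, Protocol


class RankedAlgorithm(Protocol):
    """Hypothetical wrapper interface: any algorithm that maps an input
    to a ranked list of result IDs (search, recommendation, ...)."""

    def run(self, query: str) -> List[str]: ...


def bpref(ranking: List[str], judgments: Dict[str, bool]) -> float:
    """bPref over a ranked list; judgments maps result ID -> is_relevant.
    Results missing from judgments are unjudged and are skipped."""
    num_rel = sum(1 for relevant in judgments.values() if relevant)
    num_nonrel = len(judgments) - num_rel
    if num_rel == 0:
        return 0.0
    denom = min(num_rel, num_nonrel)
    nonrel_seen = 0
    total = 0.0
    for doc in ranking:
        if doc not in judgments:
            continue  # unjudged: neither rewarded nor penalized
        if judgments[doc]:
            # Penalize by judged non-relevant results ranked above this one.
            total += 1.0 if denom == 0 else 1.0 - min(nonrel_seen, num_rel) / denom
        else:
            nonrel_seen += 1
    return total / num_rel


def unjudged_weight(ranking: List[str], judgments: Dict[str, bool]) -> float:
    """Assumed staleness measure: share of 1/rank weight held by unjudged
    results, so unjudged results near the top count more."""
    weights = [1.0 / rank for rank in range(1, len(ranking) + 1)]
    total = sum(weights)
    if total == 0:
        return 0.0
    unjudged = sum(w for doc, w in zip(ranking, weights) if doc not in judgments)
    return unjudged / total


def evaluate(algorithm: RankedAlgorithm, query: str, judgments: Dict[str, bool],
             min_bpref: float = 0.5, max_unjudged: float = 0.3) -> str:
    """Return 'stale' when too much of the ranking is unjudged,
    otherwise 'pass' or 'fail' based on the bPref threshold."""
    ranking = algorithm.run(query)
    if unjudged_weight(ranking, judgments) > max_unjudged:
        return "stale"  # signals that another judging round is needed
    return "pass" if bpref(ranking, judgments) >= min_bpref else "fail"


class StubSearch:
    """Stand-in algorithm that returns a fixed ranking, for illustration."""

    def run(self, query: str) -> List[str]:
        return ["a", "x", "b", "c", "d"]


judged = {"a": True, "b": False, "c": True, "d": False}  # "x" is unjudged
print(bpref(StubSearch().run("shoes"), judged))   # 0.75
print(evaluate(StubSearch(), "shoes", judged))    # pass
```

Keeping the staleness check separate from the quality score means a test can fail for two distinct reasons: the algorithm got worse, or the judgments no longer cover what it returns.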